There is a quiet crisis that has plagued industrial robotics for decades — robots that perform flawlessly in controlled lab environments but stumble the moment they meet a real factory floor. A new partnership between ABB Robotics and NVIDIA is directly attacking this problem, and what they have built together may represent a genuine turning point in how physical AI gets deployed at scale.

The Gap That Has Been Costing Manufacturers Billions

Here is the core problem: when engineers program a robot in a simulated environment, the real world does not cooperate. Light hits surfaces differently. Parts have microscopic variations. Conveyor belts vibrate. Materials flex. None of that messy physics exists in a clean digital training model, which means the robot that worked perfectly in simulation often needs weeks — sometimes months — of painful re-tuning once it is bolted to an actual production line.

This is called the “sim-to-real gap,” and it has quietly drained engineering budgets and delayed product launches across the global manufacturing industry. The workaround has always been expensive: build physical prototypes, test them, break them, rebuild them. That process is slow, wasteful, and increasingly incompatible with how fast consumer demand shifts.

What ABB and NVIDIA Are Actually Building

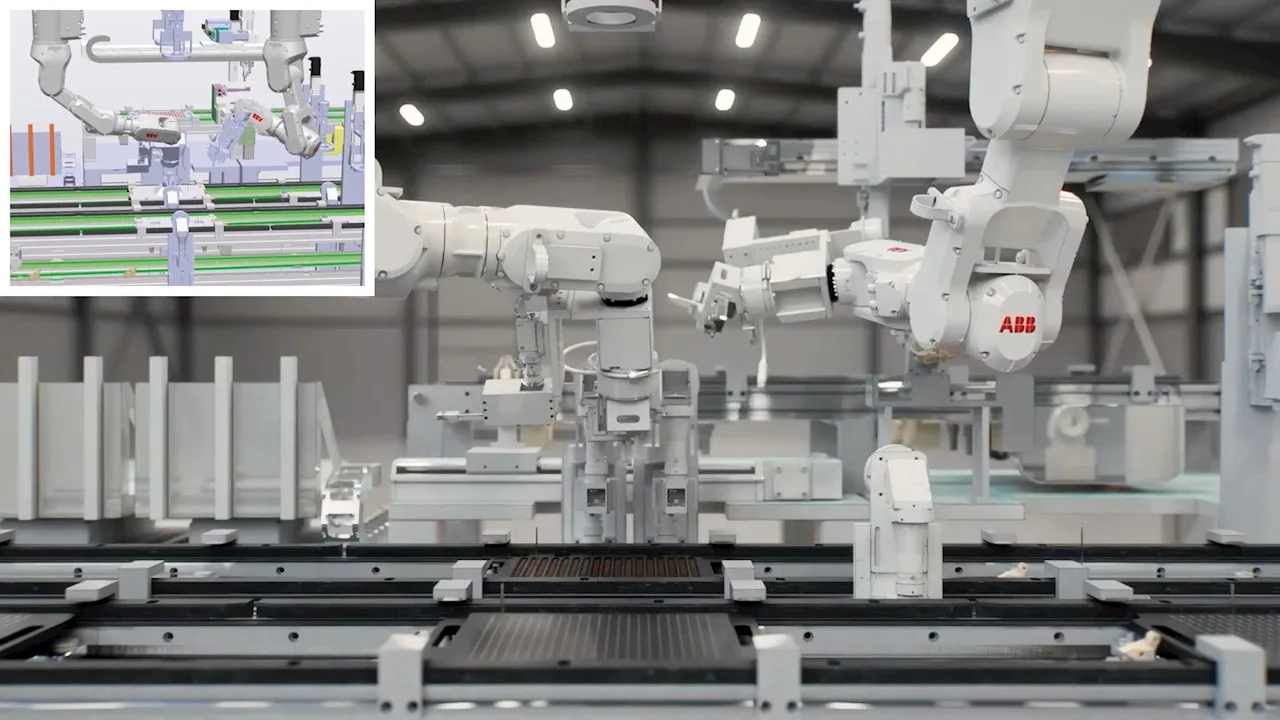

ABB’s answer is RobotStudio HyperReality, expected in late 2026, which embeds NVIDIA’s Omniverse simulation libraries directly into ABB’s existing RobotStudio programming software. Think of Omniverse as a simulation layer accurate enough that virtual materials behave the way real materials do: metal bends with the right stiffness, light scatters realistically off curved surfaces, and sensors respond to synthetic environments as they would to physical ones.

Inside this environment, engineers can design an entire automation cell — robots, sensors, conveyors, lighting rigs, all of it — and run it through a virtual controller running the exact same firmware as the physical machine. Not a simplified version. The identical software. That precision is what produces what ABB reports as a 99 percent behavioral match between the simulation and the real robot.
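
ABB does not say how the 99 percent figure is measured, so the following is only a minimal sketch of one way a "behavioral match" could be scored. It assumes time-aligned samples of a single joint angle from the virtual controller and from the physical robot, and counts the fraction that agree within a tolerance. The function name `behavioral_match`, the tolerance, and the sample values are all hypothetical and are not taken from ABB or NVIDIA.

```python
from typing import Sequence


def behavioral_match(sim: Sequence[float], real: Sequence[float], tolerance: float) -> float:
    """Fraction of time-aligned samples where sim and real differ by at most `tolerance`.

    Hypothetical metric for illustration; not ABB's published definition.
    """
    if len(sim) != len(real) or len(sim) == 0:
        raise ValueError("trajectories must be non-empty and the same length")
    within = sum(1 for s, r in zip(sim, real) if abs(s - r) <= tolerance)
    return within / len(sim)


# Made-up joint angles in degrees, purely illustrative.
simulated = [0.0, 10.0, 20.0, 30.0, 40.0]
measured = [0.1, 10.2, 19.9, 30.0, 41.5]

score = behavioral_match(simulated, measured, tolerance=0.5)
print(f"behavioral match: {score:.0%}")
```

In this toy data, four of the five samples fall within the tolerance. A real comparison would likely cover many joints, timing, and I/O signals, which is why the definition behind any headline percentage matters.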

The Numbers That Make Finance Teams Pay Attention

Efficiency claims from technology partnerships are often optimistic, but the specifics here are detailed enough to take seriously. ABB reports that this approach can reduce deployment costs by up to 40 percent and accelerate time-to-market by up to 50 percent. The precision improvement is particularly striking: traditional positioning errors of 8 to 15 millimeters fall to approximately 0.5 millimeters when synthetic training data replaces manual programming.

For context, a 10-millimeter positioning error is catastrophic in electronics assembly, where components are measured in fractions of a millimeter. Closing that gap virtually — before a single bolt is tightened on a real machine — fundamentally changes the economics of automation investment.
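
To put the positioning figures in relative terms, here is a small worked calculation. It uses only the numbers quoted above (8 to 15 millimeters before, roughly 0.5 millimeters after), which are reported figures rather than independently verified ones. The helper name `relative_reduction` is just for illustration.

```python
def relative_reduction(before_mm: float, after_mm: float) -> float:
    """Fractional reduction when an error shrinks from before_mm to after_mm."""
    if before_mm <= 0:
        raise ValueError("before_mm must be positive")
    return (before_mm - after_mm) / before_mm


# Figures quoted in the article (reported, not independently verified).
TRADITIONAL_ERROR_MM = (8.0, 15.0)
SIMULATED_ERROR_MM = 0.5

low = relative_reduction(TRADITIONAL_ERROR_MM[0], SIMULATED_ERROR_MM)
high = relative_reduction(TRADITIONAL_ERROR_MM[1], SIMULATED_ERROR_MM)
print(f"Positioning error reduction: {low:.1%} to {high:.1%}")
```

On these inputs the reduction works out to roughly 94 to 97 percent, meaning the error shrinks by a factor of 16 to 30.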

| Metric | Traditional Approach | With HyperReality Simulation |
|---|---|---|
| Positioning Error | 8–15 mm | ~0.5 mm |
| Deployment Cost | Baseline | Up to 40% reduction |
| Time to Market | Baseline | Up to 50% faster |
| Sim-to-Real Behavioral Match | Highly variable | ~99% match |
| Programming Requirement | Specialist engineers | Reduced (vision models trained on synthetic data) |
| Physical Prototype Testing | Required and costly | Largely eliminated |

Foxconn Is Already Testing This — and That Matters

Abstract partnerships become real when major manufacturers start betting production lines on them. Foxconn — the company that assembles a significant portion of the world’s consumer electronics — is already running trials of this system for device assembly. That is not a trivial test case. Consumer electronics assembly involves constant product changes, delicate components, and extremely tight tolerances. It is one of the hardest categories to automate reliably.

The fact that Foxconn is using synthetic training data generated entirely within the virtual environment to achieve production-grade accuracy is meaningful. It suggests the approach is not just theoretically sound — it is operationally viable under pressure.

A California Startup Is Making It Accessible Without Specialists

Equally interesting is what a smaller company called Workr is doing with this technology. The California-based automation provider has integrated its WorkrCore platform with ABB hardware trained through Omniverse, and it claims to be able to onboard new parts — teaching the robot what a new object looks and feels like — in minutes rather than days, without requiring specialized programming expertise.

This matters enormously for small and mid-sized manufacturers who cannot afford dedicated robotics engineers. If the barrier to deploying a new robotic task shrinks from weeks of specialist labor to minutes of guided setup, the economics of automation change for a much broader market. That is a meaningful shift in who physical AI is actually accessible to.

Where This Fits in the Bigger Story of Physical AI

What ABB and NVIDIA are doing is part of a much larger movement that the industry is calling “physical AI” — artificial intelligence that does not just process text or images, but understands and interacts with the three-dimensional physical world. Unlike language models that operate in the abstract space of words and patterns, physical AI must contend with gravity, friction, material fatigue, and the relentless unpredictability of real environments.

Solving the sim-to-real gap is not just a robotics problem. It is a prerequisite for physical AI to scale. Every warehouse robot, every surgical assistant, every autonomous vehicle, every inspection drone faces the same fundamental challenge: training happens in simulation, but deployment happens in reality. The more faithfully simulation mirrors reality, the faster physical AI can be trusted with high-stakes tasks. What ABB and NVIDIA are building is, in effect, infrastructure for the entire physical AI ecosystem.

What the Next 12 to 24 Months Likely Look Like

With RobotStudio HyperReality scheduled for release in the second half of 2026, the next phase will be watching whether these gains hold across diverse manufacturing environments — not just Foxconn’s tightly controlled facilities, but the messier, more variable conditions of mid-tier suppliers and contract manufacturers around the world.

If the 99 percent behavioral match claim proves durable across different industries, expect competitors to accelerate work on their own simulation platforms. The companies that own high-fidelity simulation infrastructure will have a structural advantage in every physical AI conversation going forward. NVIDIA’s Omniverse is already positioning itself as the industry-standard physics engine for this reason, and the ABB partnership is one of the clearest signals yet that it is winning that positioning battle.

If you work in manufacturing, supply chain, or industrial operations — or if you invest in companies that do — this development deserves your close attention. The way factories get built, programmed, and operated is being redesigned right now, not in some distant future. I will be tracking how the first commercial deployments of HyperReality perform once real-world data starts coming in. The gap between simulation and reality has always been where expensive promises go to die. This time, the architecture looks genuinely different.